Data sharing is a critical part of ensuring a reproducible and robust research literature. It's also increasingly the law of the land, with new federal mandates taking effect in the US this year. How should psychologists and other behavioral scientists share their data?

Repositories should clearly be FAIR: findable, accessible, interoperable, and reusable. But here's the thing: most data on a FAIR repository like the Open Science Framework (which is great, btw) will never be reused. It's findable and accessible, but it's not really interoperable or reusable. The problem is that most psychological data are measurements of some stuff in some experimental context. The measures we use are all over the place. We do not standardize our measures, let alone our manipulations. The metadata are comprehensible to humans but not machine-readable. And there is no universal ontology that lets someone say "I want all the measurements of self-regulation on children that are posted on OSF."

What makes a dataset reusable really depends on the particular constructs that it measures, which in turn depend on the subfield and community those data are being collected for. When I want to reuse data, I don't want data in general. I want data about a specific construct, from a specific instrument, with metadata particular to my use case. Such data should be stored in repositories specific to that measure, construct, or instrument. Let's call these Domain Specific Data Repositories (DSDRs). DSDRs are a way to make sure data actually are interoperable and actually do get reused by the target community.

Put data in DSDRs

Suppose I'm doing a project on executive function in early childhood. Wouldn't it be nice if I could download raw or aggregated data from the various tasks that people had used to measure executive function? Or suppose I'm now interested in complex sentence structure and psycholinguistics. Wouldn't it be nice to be able to download data from the hundreds of experiments on word-by-word reading time for sentences of different types? Data on both these questions exist, but they are spread out across repositories for individual papers, formatted differently in every case. Putting together more than one dataset is typically a nightmare of data harmonization and metadata guesswork.

Neuroimaging folks get this. You don't post fMRI images to Zenodo or OSF or another repository of this type. You post them to OpenNeuro - a domain-specific repository for neuroimaging. fMRI data have specific standards for metadata and particular affordances in terms of preprocessing, aggregation, and analysis. OpenNeuro is designed around these ideas.

Similarly, the Child Language Data Exchange System (CHILDES) has known this basic fact for years. They established a common schema for transcripts of parent-child conversations (the CHAT standard). Now everyone in the field of child language posts their data to CHILDES in this format, and so when you want to learn about kids' use of the word "and", you can search every major transcribed corpus of child language in a single archive. My group has done the same kind of thing with data around children's vocabulary, with Wordbank archiving parent reports about child language from dozens of languages and tens of thousands of kids.

To make high-value, reusable datasets, it is critical to aggregate the data around a common data standard that is specific to a particular instrument or construct, and that connects with the agenda of a particular research community. These tools can even help catalyze research communities to work together around a shared agenda. They can also increase data quality by putting into place domain-specific quality controls.

We need more DSDRs

The trouble is, making these domain-specific repositories is expensive and complicated. We've now made four: Wordbank, childes-db, Peekbank, and Metalab. Each of them has its own web hosting framework (similar but different) as well as its own underlying database schema, visualization apps, and application programming interface (API) for downloading the data. Even though they are structurally similar, they are not the same, and each was made as a one-off.

As a result, we now struggle under the burden of maintaining and updating these repositories, and it's not likely we can do too many more without abandoning some of them. Every time one breaks, I get lots of email. Every year I have to beg RStudio (now Posit) for free licenses to keep our visualization interface going. And it goes without saying that there is no funding for long-term maintenance of such repositories.

But maybe we could automate and centralize the construction of such repositories and host them jointly in the cloud, rather than creating wholly separate resources each time.

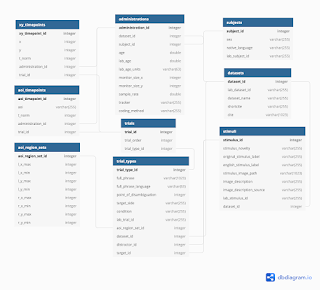

At the core of each repository is a database schema, like the schema for Peekbank:

Designing this kind of schema requires a clear understanding of the ontology for the kind of data you want to archive – it's surprisingly tricky (the Peekbank one took us many meetings over several years!). But once you have such a schema, it is straightforward to create an API to get data out of a database with this schema. And with a good API, it is surprisingly easy to define visualizations of data in the schema. People are often surprised that the interactive visualizations in something like Wordbank are the easy part!
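To make the schema-then-API step concrete, here is a minimal sketch in Python using the standard-library sqlite3 module. Everything in it is an assumption for illustration: the three tables (datasets, participants, observations), their columns, the demo rows, and the function name get_observations are made up and are not the actual Peekbank schema or API. The point is only that once the tables are fixed, a query function is a short join plus a few optional filters.

```python
import sqlite3

# Hypothetical toy schema for illustration only; NOT the real Peekbank schema.
SCHEMA = """
CREATE TABLE datasets (
    dataset_id INTEGER PRIMARY KEY,
    name TEXT NOT NULL,
    citation TEXT
);
CREATE TABLE participants (
    participant_id INTEGER PRIMARY KEY,
    dataset_id INTEGER NOT NULL REFERENCES datasets(dataset_id),
    age_months REAL
);
CREATE TABLE observations (
    observation_id INTEGER PRIMARY KEY,
    participant_id INTEGER NOT NULL REFERENCES participants(participant_id),
    measure TEXT NOT NULL,
    value REAL
);
"""


def build_demo_db():
    """Create an in-memory database with the toy schema and made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.row_factory = sqlite3.Row
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO datasets VALUES (?, ?, ?)",
        [(1, "demo_lab_a", "Hypothetical (2020)"),
         (2, "demo_lab_b", "Hypothetical (2021)")],
    )
    conn.executemany(
        "INSERT INTO participants VALUES (?, ?, ?)",
        [(1, 1, 18.0), (2, 1, 30.0), (3, 2, 24.0)],
    )
    conn.executemany(
        "INSERT INTO observations VALUES (?, ?, ?, ?)",
        [(1, 1, "accuracy", 0.55), (2, 2, "accuracy", 0.80), (3, 3, "accuracy", 0.70)],
    )
    conn.commit()
    return conn


def get_observations(conn, measure, min_age=None, max_age=None):
    """Return observations of one measure, joined with participant and dataset info."""
    query = """
        SELECT d.name AS dataset, p.participant_id, p.age_months, o.measure, o.value
        FROM observations o
        JOIN participants p ON p.participant_id = o.participant_id
        JOIN datasets d ON d.dataset_id = p.dataset_id
        WHERE o.measure = ?
    """
    params = [measure]
    if min_age is not None:
        query += " AND p.age_months >= ?"
        params.append(min_age)
    if max_age is not None:
        query += " AND p.age_months <= ?"
        params.append(max_age)
    query += " ORDER BY p.participant_id"
    return [dict(row) for row in conn.execute(query, params)]
```

Calling get_observations(build_demo_db(), "accuracy", min_age=20) pulls matching rows from both demo datasets in a single call, which is the kind of cross-dataset query that is painful when each dataset lives in its own per-paper repository.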

The other pain point is importing new datasets into the schema: typically this work requires writing custom data-munging code for each dataset to define the relation between the incoming data format and the specific tables required in the schema. For Wordbank we even defined an intermediate abstraction layer for specifying the mapping between incoming data and our schema.
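One way to picture such a mapping layer is as a small declarative spec per dataset rather than a custom script. The sketch below is a guess at the general shape, not Wordbank's actual implementation: the column names (SubjID, age_days, prop_correct), the target fields, and the days-to-months conversion factor of 30.44 are all assumptions made up for the example.

```python
import csv
import io

# Hypothetical mapping spec for one incoming dataset: each target field in the
# shared schema names the source column it comes from and how to convert it.
MAPPING = {
    "participant_id": {"source": "SubjID", "convert": str},
    # Assumption: this lab recorded age in days; 30.44 is the mean month length.
    "age_months": {"source": "age_days", "convert": lambda v: round(float(v) / 30.44, 1)},
    "value": {"source": "prop_correct", "convert": float},
}


def import_rows(csv_text, mapping):
    """Translate rows of an incoming CSV into dicts keyed by schema field names."""
    reader = csv.DictReader(io.StringIO(csv_text))
    available = reader.fieldnames or []
    missing = [spec["source"] for spec in mapping.values() if spec["source"] not in available]
    if missing:
        # Fail early so a misnamed column does not silently produce bad data.
        raise ValueError(f"incoming data lacks expected columns: {missing}")
    return [
        {target: spec["convert"](row[spec["source"]]) for target, spec in mapping.items()}
        for row in reader
    ]
```

With a spec like this, adding a new dataset means writing a new MAPPING rather than new import code, and the up-front check for missing columns is one simple example of a domain-specific quality control.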

In principle, all of this work could be wrapped in a sufficiently general framework to make it unnecessary to create a custom hosting solution. Each database could be an instance of a broader database type, or could even live inside a giant wrapper database. And each API could be generated automatically from the database schema. You could even imagine a world where these DSDRs were created automatically out of an app like Airtable. There's some serious design work to do to describe the scope of such a system, but it is certainly not out of the realm of possibility.
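As a rough illustration of what "generated automatically from the database schema" could mean, the sketch below introspects a SQLite database and builds one getter function per table. It is a toy under stated assumptions: the names generate_api and get_<table> are hypothetical, it only supports equality filters, and a real system would also need authentication, pagination, and joins.

```python
import sqlite3


def generate_api(conn):
    """Build one getter per table by reading the schema from the database itself."""
    table_names = [
        row[0]
        for row in conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'")
        if not row[0].startswith("sqlite_")  # skip SQLite's internal tables
    ]
    api = {}
    for table in table_names:
        # PRAGMA table_info returns one row per column; index 1 is the column name.
        columns = [row[1] for row in conn.execute(f"PRAGMA table_info('{table}')")]
        api[f"get_{table}"] = _make_getter(conn, table, columns)
    return api


def _make_getter(conn, table, columns):
    """Return a function that selects rows from `table` with equality filters."""
    def getter(**filters):
        unknown = set(filters) - set(columns)
        if unknown:
            raise ValueError(f"unknown columns for {table}: {sorted(unknown)}")
        where = " AND ".join(f'"{col}" = ?' for col in filters)
        sql = f'SELECT * FROM "{table}"' + (f" WHERE {where}" if where else "")
        cursor = conn.execute(sql, list(filters.values()))
        names = [desc[0] for desc in cursor.description]
        return [dict(zip(names, row)) for row in cursor.fetchall()]
    return getter
```

Because the getters are derived from the schema, a new repository with a different set of tables would get its download functions for free, which is the property that would let many DSDRs share one hosting framework.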

Challenges

We have some work to do to make DSDRs like Wordbank the norm. At a minimum, we need:

- Credit assignment: robust norms for giving contributors credit when their data are used. At the moment, Wordbank and CHILDES simply ask folks to cite the contributors' paper (e.g., http://wordbank.stanford.edu/contributors) but in the long term, datasets should have DOIs that are downloaded with the data and linked to the paper DOI automagically.

- Dataset use tracking: repositories also need DOIs and methods for tracking their use and impact beyond citations of papers about the repository, which are often out of date and which split impact across multiple products.

- Effective data versioning solutions: we need easy tools for using historical snapshots of repositories so that analyses of DSDR data are reproducible. We have hand-engineered this for some of our DSDRs, but we need to be able to roll out this functionality with limited extra effort. Right now some key repositories like CHILDES have no accessible version control, meaning analyses can break down the line and users will not know why.

- Mechanisms for ensuring the longevity of DSDRs: we need to ensure that DSDRs don't just rely on single investigators for maintenance and updates, perhaps through partnerships with libraries and cloud providers.

There's a lot to do.
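On the versioning item in particular, the core idea is simple even if the engineering is not: freeze a labeled snapshot at each release and let analyses pin to a label, ideally with a checksum so a silent change is detectable. The sketch below is a minimal in-memory illustration and does not describe how any of our repositories actually implement versioning. The class name VersionedRepository, its methods, and the version labels are all hypothetical.

```python
import hashlib
import json


class VersionedRepository:
    """Toy repository that freezes immutable, checksummed snapshots at release time."""

    def __init__(self):
        self._working = []    # records added since the repository was created
        self._snapshots = {}  # version label -> (checksum, frozen JSON text)

    def add_records(self, records):
        """Add new records to the working copy (not visible until released)."""
        self._working.extend(records)

    def release(self, version):
        """Freeze the current working copy under a version label; return its checksum."""
        if version in self._snapshots:
            raise ValueError(f"version {version!r} already released")
        frozen = json.dumps(self._working, sort_keys=True)
        checksum = hashlib.sha256(frozen.encode("utf-8")).hexdigest()
        self._snapshots[version] = (checksum, frozen)
        return checksum

    def get(self, version, expected_checksum=None):
        """Load a released snapshot, optionally verifying the checksum an analysis recorded."""
        checksum, frozen = self._snapshots[version]
        if expected_checksum is not None and checksum != expected_checksum:
            raise ValueError(f"snapshot {version!r} does not match the recorded checksum")
        return json.loads(frozen)
```

An analysis script would record the version label and checksum it used, so that rerunning it later loads exactly the same data even after new datasets have been added to the repository.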

Conclusion

Lost in many discussions of data sharing is that sharing data in individual packages fosters reproducibility but often not interoperability and reuse. Reuse comes when data are organized around specific disciplinary constructs, frameworks, and measurements. And reuse value grows further as the size and diversity of the datasets in a domain-specific repository increase. We need more domain-specific data repositories to catalyze research communities, especially in smaller fields where no such data resource exists. To create these, we will need new technical tools for rapidly and sustainably spinning up new repositories. These tools should be a development priority.
